If, for some reason, you cannot read this document, visit: http://www.gismonitor.com/news/newsletter/archive/060503.php

EDITOR'S NOTE

I'm still on the lookout for Innovative Organizations - service organizations that possess a unique practice, quality, or vision from which we all can learn. If you know of such an organization, send me an e-mail with the name, contact information, and why it is special. Next week I'll tackle open source GIS. Thanks for all the contributions thus far on the topic. If you've not chimed in with an opinion, interesting use, or new product, there's still time. Thanks for your continued support.

Adena

EVERYTHING YOU EVER WANTED TO KNOW ABOUT DATA MODELS (BUT WERE AFRAID TO ASK)

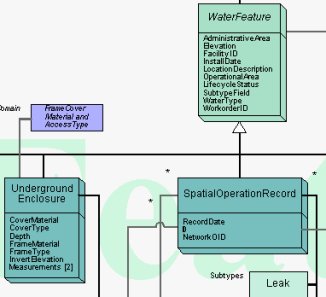

What is a Data Model?

As users of geospatial technology, we model the world according to our own specific purposes. We might be interested in land management, site location, water network maintenance, or any one of the topics in the long list of applications for GIS. Each of us chooses how to model our data to fit the type of problem we are trying to solve, whether it be a raster or vector model, or a digital elevation model. Many of us mix and match the models. Some of us require built-in topology, while others do not. Some of us separate the spatial information and the database information. These are all "higher level" data model questions.

With GIS now thirty-odd years old, we are looking even more deeply at application-specific data models. Vendors, companies, schools, government agencies, and standards organizations are developing data models aimed at specific disciplines.

Put simply, data models are structures into which data is placed. (A structure is a set of objects and the relationships between them.) The model ideally makes the work to be done using the GIS and its data "easier." ESRI puts it this way: "Our basic goals [in developing data models] are to simplify the process of implementing projects and to promote standards within our user communities."

Data models can detail everything from field names (e.g., "roads" vs. "streets") and the number of attributes for each geographic feature (e.g., 2 or 200) to where network nodes are required, how relationships of connected linear features are indicated, and much more. Some are aimed at a single software platform (like ESRI's data models), while others are broader, such as the Tri-Service's Spatial Data Standard for Facilities, Infrastructure, and Environment (SDSFIE). (Tri-Service uses both the terms "standard" and "data model" to describe its products.)

Who Creates Data Models? And Why?

Data models come from many places.
In the early days of GIS software, each implementer, likely working with its software vendor, developed its own model. For many years, some GIS software vendors have shared data models with users, either formally or informally. Some GIS users make their models available to other users. Sometimes industry organizations and government agencies tackle data models.

From my research I've found three reasons to develop data models: (1) a GIS user (individual or group) develops a model to meet its organization's needs; (2) a vendor develops a model and makes it available to its customers to help "jumpstart" their implementations; and (3) industry organizations and government agencies craft models with the idea that they will simplify data sharing. There may be some mixed motives across those groups, so those statements may be oversimplified.

ESRI and Data Models

To get ESRI's take on data models I spoke with Steve Grise, Product Manager for Data Models. When I asked how standards play into data models, he explained that most data models are built on standards. He pointed to the existing FGDC Cadastral Data Content Standard, which details what information "should be" included in such a GIS layer. That Standard is the basis of the ESRI parcel data model: the ESRI data model basically incorporates all of the definitions included in the FGDC standard. So, as Grise puts it, "They are exactly the same." What ESRI basically did was implement the standard in ArcGIS. Any other vendor or user can do the same.

I continued by asking what benefit there is if, for example, someone using MapInfo implements a model based on the FGDC Content Standard and someone else, using ESRI software, uses the ESRI implementation of the same standard. Are the two datasets more interoperable? The answer, according to Grise, is that such a model helps.
Here's how: Consider that there is actually more than one type of parcel - ownership parcels (What land do I own?) and tax parcels (How does my land get taxed if some is agricultural and some residential?). The FGDC model details both types, so that hopefully, when two organizations share data, they can share the "right kind" of parcel information.

That brings up the annoying truth that nearly every local government calls its parcels something different - even if they are actually ownership parcels or tax parcels! That problem is something that organizations are addressing in their work on semantic (or information) interoperability. There is no magic here, but the solution is rather elegant. If there is a data content standard (for example, the FGDC version that lays out a listing of all the different kinds of data one might have in that application area), it can be turned into a "reference schema," a "generic set of names" for the features. Then, each town can "map" its data names to the ones in the reference schema. If two towns have mapped to the "reference schema," then they can map to each other. This is something like the transitive property applied to field names: if a=b and b=c, then a=c.

According to Grise, data models basically "simplify project implementation." Instead of everyone having to go back to the "deep philosophical roots" of how parcel data can be defined, GIS users can just get started knowing they are building on what's been learned in the past.

ESRI's data model initiatives date back some six to eight years, according to Grise. In typical ESRI-user fashion, a well-put-together model for water utilities from Glendale, California, built on the version of ArcInfo that was current at the time, started to make the rounds to other users. That was the basis of the first formal ESRI data model, developed for water utilities. The project grew from there and now models - in various states of completion - are available for 21 application areas.
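
For readers who think in code, the "reference schema" idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the town field names and the reference names (parcel_id, owner_name, tax_class) are invented for the example and are not taken from the FGDC standard or from any vendor's data model.

```python
# Hypothetical mappings from each town's local field names to a shared
# reference schema. None of these names come from the FGDC standard.
TOWN_A_TO_REFERENCE = {
    "lot_id": "parcel_id",
    "owner_nm": "owner_name",
    "tax_cls": "tax_class",
}
TOWN_B_TO_REFERENCE = {
    "PARCELNO": "parcel_id",
    "OWNER": "owner_name",
    "TAXCODE": "tax_class",
}


def invert(local_to_reference):
    """Return reference-name -> local-name, rejecting ambiguous mappings."""
    inverted = {}
    for local, reference in local_to_reference.items():
        if reference in inverted:
            raise ValueError(f"two local fields map to {reference!r}")
        inverted[reference] = local
    return inverted


def compose(source_to_reference, target_to_reference):
    """Derive source-name -> target-name by going through the reference schema."""
    reference_to_target = invert(target_to_reference)
    return {
        source: reference_to_target[reference]
        for source, reference in source_to_reference.items()
        if reference in reference_to_target
    }


def translate_record(record, field_map):
    """Rename the fields of one record; unmapped fields are dropped."""
    return {field_map[name]: value for name, value in record.items() if name in field_map}


# Neither town ever mapped to the other directly, yet a mapping falls out.
a_to_b = compose(TOWN_A_TO_REFERENCE, TOWN_B_TO_REFERENCE)
record_a = {"lot_id": "12-034", "owner_nm": "J. Smith", "tax_cls": "agricultural"}
record_b = translate_record(record_a, a_to_b)
```

The point of the sketch is the bookkeeping: each town maintains one mapping (to the reference schema) rather than one mapping per neighbor, and any town-to-town translation can be derived when it is needed.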

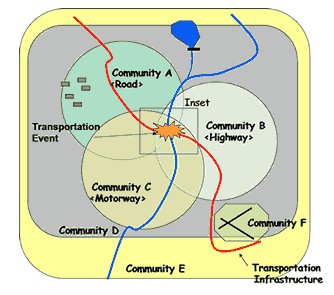

I asked Grise to sketch out how users take advantage of a data model in a project. The data model can be thought of as a long laundry list of potential objects and attributes for a particular use of GIS. ESRI's models are available in poster form - basically lots of arrows and boxes and lists of attributes. The team - ideally both those who will develop the model and those who will use it - works together to scratch out the parts that are not needed, and to add in those that are missing. I asked Grise if the model could be thought of as a type of "straw man." He replied, "It's far easier to start with the front of the poster than the backside [the blank side]."

Next, the technical folks take the data model into the "back room" and perform the magic that transforms the data model from its generic form to the one illustrated in the marked-up version. This version can then be loaded into ArcGIS, and a small set of data can be loaded in as a prototype. Then, both data creators and end-users can review the data in the model to see if they are on track.

An aside: ESRI has created tools so that once a geodatabase is created, users can print out their own poster of their data model. That is useful for explaining the model, but can also be used to "check" the created model against the marked-up version. There are also tools to extract, copy, and paste objects and tables from one data model into another.

We finished up our conversation by returning to standards. Grise suggests that the GIS community is still working to determine which standards are most important to address first. He points out that we are addressing content standards (FGDC), methods to store geometry (ISO), methods to encode data (ISO) and tools to enable Web Services (OGC and other organizations that deal with XML and SOAP). He suggests that we need to keep an eye on the problems that end-users are trying to solve and to try to approach those first.
He suggests that the data content standards do have a direct impact, for example, while others are a bit out "in front" of potential users.

Intergraph and Data Models

Data models may seem like a "hot topic" these days, but David Holmes, director of worldwide product strategy, Intergraph Mapping and Geospatial Solutions, makes it clear that the company has been developing such models for 15 years, since MGE and FRAMME came on the scene. Intergraph has models for utilities and communications, local government, transportation, cartography, geospatial intelligence, and others.

Holmes and his colleagues Brimmer Sherman (vice president, Utilities & Communications) and Kecia Pierce (industry consultant, Utilities & Communications) suggested a few reasons the topic seems to be "in the news" just now. The database focus of GIS (the trend toward storing both the spatial and non-spatial data in a standard database) may have helped rekindle interest, according to Sherman. The new connections with IT departments, which have always been active data modelers, may be a factor, too. Finally, the three suggested that the new players in the GIS market may just now realize the return on data models. It costs clients quite a lot of time and money to build from scratch, rather than beginning with a well-thought-out model.

What is new in the past few years, Holmes notes, is that the amount of intelligence in the data model is increasing. Gone are the "simple" points, lines, and polygons. Now the "data" holds information about objects' connectivity, symbology, and other properties. One key part of the change: data models can now help prompt for correct data creation. So, for example, if a user wants to terminate a water line, the software will prompt the user with appropriate ways to do that, listing only the terminators that make sense in that situation. That type of smart data model helps limit errors in data creation - assuming the model is built correctly.
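
To make the water-line example concrete, here is a minimal Python sketch of rules living in the data model rather than in the application. It is not Intergraph's implementation; the line types, the terminator names, and the two functions are all hypothetical, chosen only to show how a rule table can drive both the prompt and the validation.

```python
# Hypothetical rule table stored with the data model: for each kind of line,
# the terminating features that make sense. The names are invented.
VALID_TERMINATORS = {
    "water_main": ["end_cap", "hydrant", "valve"],
    "service_line": ["meter", "end_cap"],
}


def terminator_choices(line_type):
    """Return the terminators an editor should offer for this line type."""
    if line_type not in VALID_TERMINATORS:
        raise KeyError(f"no rules defined for line type {line_type!r}")
    return sorted(VALID_TERMINATORS[line_type])


def terminate(line_type, terminator):
    """Accept the terminator only if the model's rules allow it."""
    choices = terminator_choices(line_type)
    if terminator not in choices:
        raise ValueError(
            f"{terminator!r} cannot terminate a {line_type}; choose one of {choices}"
        )
    return {"line_type": line_type, "terminator": terminator}


# The editing software would list these options to the user...
offered = terminator_choices("service_line")
# ...and a valid pick is recorded, while an invalid one raises an error.
placed = terminate("service_line", "meter")
```

As the article notes, the protection is only as good as the rule table: if the model lists the wrong terminators, the software will confidently prompt for the wrong ones.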
In the past, that type of intelligence was often held in the application software, or in the designer's head. I'll offer that this change may be part of the "data model renaissance" - now there is far more that can be modeled in the data. Said another way, the development of data models now may have a greater return on investment than in the past.

Pierce explained that Intergraph has a range of data model offerings that vary from discipline to discipline. QuickStart packages combine not only a data model, but also attribute definitions, relationships, reporting templates, sample queries, traces, and analysis tools - a sort of data model/application suite. The closer the users stay to the data model provided, she points out, the quicker they are up and running. Holmes explained that these complete packages are far more appropriate for sophisticated, centralized solutions, while sample "data model only" offerings serve the needs of decentralized users that are typically focused on desktop solutions.

Over the years Intergraph's sales and implementation teams have tweaked data models and collected and leveraged best practices. Today's models typically cover between 80 and 90 percent of users' needs. In addition, Intergraph provides tools with its software to tweak the models further. As might be expected, some users make few changes, while others team up with third parties to update data models to their needs. For the largest of customers, it's not uncommon to tap Intergraph itself to craft the required model.

My sense, after speaking with the team from Intergraph, is that the goal is to make the data model as applicable to its user as possible. That's why Intergraph's transportation data model provides all six common linear reference system models, for example. Intergraph doesn't view the data model as a key piece of data sharing; that is done via underlying open systems - for example, an open API (application programming interface) and the use of standard databases.
Those APIs will also let external systems look at the data model tables and structures.

How will the new and existing data models being offered and implemented impact the market? None of the Intergraph group felt that a single, implementable physical standard would emerge. They noted differences in real world implementations that would preclude using similar physical models, even if the customers used software from the same vendor. Sherman noted that some consultants had developed data models and offered them for sale, with few takers. Similarly, he pointed out, the Tri-Service Data Model, while widely used inside the Tri-Service and federal government, was rarely used in industry.

End-users will have different relationships with data models, depending on their roles with the GIS. Those who work on operational tasks (daily updates, for example) will be rather far from the data model. Why? The application software will do the work of understanding the model and presenting it in a way aimed at performing those tasks. The end-user then can focus on the work, not on understanding the complexities below. Those performing more analytic, non-operational work, such as ad-hoc queries and "what if" scenarios, will need a better handle on the data model. The bottom line for both groups is the same, however: the data model is intended to make both sets of tasks easier.

The Open GIS Consortium and Data Models

To get a non-vendor perspective I spoke with Kurt Buehler, Chief Technical Officer of the Open GIS Consortium. (I am a consultant to that organization.) He made it clear that the Open GIS Consortium is interested in only one type of data model: those that enhance interoperability. OGC's work on data models dates back to its early years. One of the first outcomes of the Consortium was the development of a Spatial Schema, essentially the spatial data model upon which all others would be built.
That model, now ISO 19107 (Spatial Schema), forms the basis of the OpenGIS Simple Feature Specification, the OpenGIS Geography Markup Language Implementation Specification, and the Federal Geographic Data Committee's Framework Data Layers, among others.

The FGDC Data Content Standards are of particular interest because they are designed for exchange, and the OGC and other organizations have been involved in their deployment. The objectives of the Cadastral Data Content Standard, for example, include providing "common definitions for cadastral information found in public records, which will facilitate the effective use, understanding, and automation of land records," and "to standardize attribute values, which will enhance data sharing," and "to resolve discrepancies ... which will minimize duplication within and among those systems."

The FGDC models, which detail geometry and attribute structures, are written in Unified Modeling Language, a language for writing data models. While very useful for people who do that type of work, it's not so valuable for those who implement models (say in a particular vendor's software package) or for any but the most accomplished programmers. To communicate the model effectively for its intended widespread usage, Buehler argues, there needs to be a simpler, easier-to-digest method of delivery. Buehler suggests that Geography Markup Language, GML, is a logical choice for sharing these models. Because it's open, published, and supported by many GIS vendors already, it should enhance uptake and use of the models.

Once a model is completed and available - in, for example, GML - how is it used? The reference models play two important roles, one for those crafting their own models, and another for those organizations looking to make their data shareable to neighbors, states, commercial entities, and the National Spatial Data Infrastructure. Data model implementers can take the common model and use it as the basis of their own data models, developed for their own purposes.
If a transportation organization on a remote island in Puget Sound wants to tweak the FGDC model for its needs - perhaps removing some optional attributes that are not applicable, or including some geometries only used in its system, that is possible. If a vendor wants to use the model as the basis for a version in its data format, that is possible, too. The common data model acts as the starting point for these and other data model creators. And, as you might expect, the "closer" any implementation is to the reference the "easier" it will be to share data down the road. For those who want to make data available for sharing, the data model plays a slightly different role. The data model is the basis for a translation capability, or "layer" (that can be implemented in many different ways), that will allow an organization's currently implemented data model to behave "as if" it is the reference model. Essentially, a layer sits between the current implementation and the rest of the Internet world. That layer makes the underlying model look and act as though it matches the reference data model exactly. And, if the organization looks across the Web at another organization that also implements such a solution, that organization's data will look and act as though it, too, is implementing the reference data model. Of course, behind the curtain, it could be whatever hardware, software, and custom data model the other organization selected to meet its needs. In the United States, the vision down the road might look like this: Each of the 3000 counties hosts its own online GIS. (I chose counties for the purpose of illustration, but these could be built up from smaller or larger civil divisions, of course.) Each county selected a software package and a data model, to best meet its needs. 
If each county makes its data available "as though" it is in the reference data model, it would be relatively simple to write an application that knits two, or six, or a state, or even a nation's worth of data together in a single application. And since the data is accessed "live," it would be as up-to-date as the data held on the local county servers. Also important, those interested, who have permission, can work with the data, use it, and understand it, without creating a local copy. That should sound suspiciously like the NSDI vision.

What's Ahead?

This is an active time in the GIS data model world. The FGDC Data Content Standards are well along. So, too, are many of the vendor-offered data models. Will vendor models be widely implemented in user sites? Will the FGDC Standards enhance data sharing in the near future or long term? Is there friction between those trying to best serve their customers and those who envision NSDI? These are questions to ask in the coming months and years.

In the meantime, I'd like to gather some input from GIS end users on data models. Have you implemented a vendor-provided data model? How did it go? Did it "jump start" your implementation? What about the FGDC Content Standards? Are end-users following their development? Do you think either vendor-created data models or federal standards will impact your organization? How? Send me a few paragraphs with your experiences and predictions. I'll share some responses next week.
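
A closing sketch for the technically inclined: the translation "layer" and the county-by-county vision described in this article might look something like the following in Python. Everything here is an assumption made for illustration. The county names, field names, and the CountyAdapter class are hypothetical, and the fetch functions stand in for live queries against each county's own server.

```python
class CountyAdapter:
    """Presents one county's records as if they used the reference model."""

    def __init__(self, county, fetch_local, local_to_reference):
        self.county = county
        self._fetch_local = fetch_local  # stand-in for a live query
        self._local_to_reference = local_to_reference

    def parcels(self):
        """Yield records renamed to reference fields, fetched at call time."""
        for record in self._fetch_local():
            translated = {
                reference: record[local]
                for local, reference in self._local_to_reference.items()
                if local in record
            }
            translated["county"] = self.county
            yield translated


def knit(adapters):
    """Combine every county's parcels into one stream in the reference model."""
    for adapter in adapters:
        yield from adapter.parcels()


# Two hypothetical counties with different software and local data models.
def fetch_county_a():
    return [{"lot_id": "A-1", "owner_nm": "J. Smith"}]


def fetch_county_b():
    return [{"PARCELNO": "B-7", "OWNER": "R. Jones"}]


adapters = [
    CountyAdapter("County A", fetch_county_a, {"lot_id": "parcel_id", "owner_nm": "owner_name"}),
    CountyAdapter("County B", fetch_county_b, {"PARCELNO": "parcel_id", "OWNER": "owner_name"}),
]
combined = list(knit(adapters))
```

The application that calls knit() never sees lot_id or PARCELNO; it sees only the reference names, and because each adapter fetches when asked, no local copy of the county data is kept.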

I've never used IM, but understand from those who do that it's a great communication tool. The only challenge: you can only "message" people from your same vendor. Even that's a bit misleading because all of the IM clients I'm aware of are free. Therefore, as with browsers, you could have a half dozen different ones on your computer or mobile device if you so desire. One key question to ponder: Without this "kiss and make up" deal, would the exploration of interoperability have happened? Wired notes that some analysts feel that the linking of the two IM systems was inevitable because of the widespread use of IM in business. Also in the mix, the Federal Communications Commission (FCC), which approved the AOL Time Warner merger, made provisions that AOL make its IM technology interoperable before it offered advanced features such as video streaming on the platform. Since the merger, AOL has lost market share in the IM space, and is asking the FCC to throw out that requirement. For the record, recent stats show AOL's instant-messaging users at 59.2 million in April, compared to MSN's 23.6 million and Yahoo's 19.1 million. Free instant messaging is popular among consumers, but as with most technologies, a professional-grade solution from the likes of Lotus costs more. AOL and Microsoft may be heading into that space, which may have been part of their respective choices to build on industry standards from the Internet Engineering Task Force. The Wired article suggests that interoperable IM will only be available for paying customers, while consumers will still be limited to conversing with those who share a vendor. Why? Free IM clients are sources for significant advertising revenue for both companies. Let's turn now to geospatial technologies. Granted, IM is a communications technology, more along the lines of e-mail (which is very interoperable, thanks to the long published SMTP and POP protocols) or phones. 
Still, interoperability certainly is not a new idea to those who use GIS data and software. One suggestion above is that business users are demanding interoperability and have the clout to make it happen. I'll suggest that GIS never had that sort of clout in the business world. Today the vast majority of users of GIS are in the public sector. And, that sector seems to be the one funding most of the work toward open standards, at least Web-based standards. A typical list of sponsors for Open GIS Consortium work includes "US Dept. of Defense National Imagery and Mapping Agency (NIMA), US Army Corps of Engineers Topographic Engineering Center (TEC), the Federal Geographic Data Committee (FGDC), NASA, the US Department of Agriculture Natural Resources Conservation Service (USDA-NRCS)." To be fair, more potentially lucrative business areas pull in industry sponsors. The recent OGC Location Services Initiative sponsors include Oracle, IBM, Microsoft, Webraska, Yeoman, Intergraph, ESRI, CTIA, In-Q-Tel, MapInfo, Autodesk, Siemens and Hutchison 3G. I'm not exactly sure what that says about who drives standards, but it is interesting to look at how those funded by government compare to those funded by industry.

Another interesting twist to the AOL/Microsoft interoperability play is the idea, suggested by Wired, that paying customers will have interoperability, while "free" users will not. I suppose there is some level of that now in the GIS marketplace. More expensive, more powerful products typically can read and write more formats, while free ones typically read only one or two. Some vendors do not provide free viewers for their data formats at all. As demand for interoperability grows, I wonder if GIS vendors will provide support for it only in higher-priced products?

One other aspect to the AOL/Microsoft agreement is a vision for exploring digital media.
The interesting part of that deal is that Time Warner is in the content business, and, according to an eWeek editorial by Jason Brooks, "AOL isn't a technology company, it's a service provider." Microsoft really isn't a true content provider (yet), but it certainly is a technology company and one that just might be on the cutting edge of the digital delivery business. That type of "compromise" deal, one focusing on the strength/weakness pairings of the two players, makes me think of the ESRI and Bentley interoperability plan. ESRI is not an AEC company and Bentley is not really a GIS company. Each can ideally grow its market share, but not threaten its core business. Microsoft and AOL may have the same idea. What about technical feasibility? Maurene Caplan Grey, messaging analyst at Gartner Inc., in an AP article, makes it clear that IM interoperability is not a technology issue, but is rather limited "by the providers' refusal to cede control over their users." There are those who make the same argument about the success of interoperability efforts in the geospatial community. What about smaller players "ganging up" on the market leader? In 2000, MSN, Yahoo, and then-hot-but-now-extinct ExciteAtHome and Prodigy formed a coalition called IMUnified. The idea was to form a powerful group that would have interoperable systems. That in turn would force the big player, AOL, to join the party. (AOL was not invited to join IMUnified at its inception.) AOL at that time actually submitted a proposed standard to the Internet Engineering Task Force and tested out some solutions, but argued that none met its security and other requirements. IMUnified disbanded not long after that work. Finally, remember that GIS technology is heading toward its 40th birthday. Web GIS dates back eight or more years, depending on the definition. It's certainly hard to retrofit standards to technology that is widely used and mature. 
Instant messaging is younger, I'd guess less than three or four years. While I don't think I can make any definitive statements, it sure seems that it would be easier to stuff a younger genie into a bottle than an older one.

"Interest for GIS at the University of Tuzla, Faculty of Mining, Geology and Civil Engineering, Bosnia and Herzegovina, is very high. Academic staff is trying to penetrate in modern technologies that would enable GIS utilization in the range of fields covered by geology, mining, civil engineering and environment. The situation in the state reflects to the limited University funds and this disable students' participation in GIS education. If you see any opportunity in this issue (software, hardware, publications), please contact Dr. Tihomir Knezicek, professor at the Faculty of Mining, Geology and Civil Engineering Tuzla. " � Cliff Mugnier from Louisiana State University provides some history and ideas about mapping shoreline raised by my mention of a question regarding the topic at the recent URISA Summit. "Boy, I sure am glad I didn't attend the URISA meeting you just reported. "'Question (from a NOAA employee): We create a shoreline, and USGS has a different shoreline. Congress wants one shoreline. How do we fix this?' "'Reply (from Michael Domaratz, representing the USGS National Map): The agencies are working on it, but that it might get worse as more local shoreline data is brought into play.' "Apparently these people are oblivious to the fact that USGS is a derivative mapping agency for shorelines and only the National Ocean Survey/National Geodetic Survey has the 1806 Congressional Charter to map the nation's shoreline. If anyone disagrees with a NOAA chart for the legal coastline of the United States, they are wrong. Only NOAA can define the waterline/shoreline and only NOAA defines the territorial waters of the United States. If The National Map disagrees with NOAA, The National Map is wrong. It remains to be seen if anyone in the USGS has the grit to correct their database to reflect the "real" shoreline. It remains to be seen if anyone in the USGS has the grit to acknowledge that they must follow NOAA's lead."

POINTS OF INTEREST

Farmers and Rocket Scientists Partner. USDA and NASA have a new partnership aimed at bringing remote sensing to precision agriculture. The first step is a $1 million program to establish Geospatial Extension Programs at land grant universities. According to the deal, USDA can call upon NASA's know-how and data, and the two organizations will explore water cycles, invasive species, weather and changes in the atmosphere among other topics. Another bonus: this might bring more geospatial technologies to those land grant institutions, many of which already have strong geography traditions.

New Location-based Uses for Cell Phones (with patents). I've reported on at least one solution that uses the concentration of cell phones on the highway to measure traffic. But a solution from Israel actually times signals using the data. An inventor working for IBM has a service that will tell you the posted speed limit on the road on which you are driving. It will also provide an alarm if you exceed the limit. A patent was awarded for turning a phone into a type of heart monitor. Another spatially-related offering: a system that basically "turns off" cell phones, beepers and other wireless devices - ideally to keep a theatre quiet or aid in a police investigation.

Researchers Restricted in 9/11 Work. Several Cornell professors recently studied the 9/11 attacks with the goal of providing insight to engineers and others to prevent future tragedies. Although the researchers had hoped to gain access to maps of the underground infrastructure, "city officials and utility companies considered such information too sensitive to distribute." The team did not receive clearance to publish some of its results. Commenting on GIS restrictions post 9/11, researcher Thomas O'Rourke says, "My concern is that we don't replace what should be an age of prudence with an age of paranoia."

I Want My Local Weather. Technology Review describes the new marketplace providing personalized weather. For example, instead of using the 12 km X 12 km weather data provided by the National Weather Service, Paul Douglas started Digital Cyclone, which predicts weather events over 6 km X 6 km areas and offers the information over mobile phones. This four-year-old company in Minneapolis is one of many serving those who want very local weather. Coming soon, location-based weather, which uses locational information from a GPS-enabled phone to provide the location of interest. (AccuWeather in State College, Pennsylvania, generates one-kilometer-resolution weather maps available via PDA. As a Penn Stater I have to mention that one of every four meteorologists in the U.S. studies at Penn State.)

Return on Investment. Roto-Rooter is showing a 33% increase in productivity, using GPS-enabled phones to track and task its plumbers. The company uses slim, cheap, rugged, no-fuss Motorola phones. "For a plumber, it really doesn't have to be sexy," says Steve Poppe, Roto-Rooter's chief information officer, "It just has to work."

Comparing LBS Offerings. An article in India's Financial Express details the differences between the two companies offering location-based services in Delhi. As someone in the business, I found it very tough to decipher which I might prefer. I wonder if this will turn away potential customers from both services?

Focusing Sound. What if you could focus sound the way lasers focus light? Then only those in the path of the sound could hear it, ideally without the din of other local noises. That's what American Technology Corp. (ATC) has developed, in what it calls HyperSonic Sound (HSS). The ultimate product, probably some years away, could provide very localized sound for things like video games or messaging.

News for the Lazy. Reader Laurence Seeff sent on this link to Newsquakes, "news for the lazy." It's a geocoded news page with news highlighted on a map. A mouse-over provides the headline, and a click takes you to the actual article. The day I visited, news sources included the BBC, Wired and a few news services. The one oddity in my quick look: all the news from Canada was geocoded to what looks to me to be British Columbia.

Streisand and Aerial Imaging. I've written about Kenneth Adelman's California Coastal Records Project website, californiacoastline.org, before. This week reader Derek Bedarf sent a link to an article reporting on a lawsuit filed against Adelman and other parties by Barbra Streisand for collecting and distributing images of her home. Adelman and his wife use their own helicopter and camera to create an image library of the California coast. The suit cites past concerns about stalkers and argues that the images provide information for the public to get into Streisand's property. Says Adelman, "The photographs were taken in a public place where she doesn't have a reasonable expectation of privacy."

Shelby County Makes a Choice. Shelby County, Indiana, needed to update its GIS and find a new host. First, it approached the organization currently hosting the system and received a quote of $8,000 for the software and $29,000 for the enhancements. The GIS administrator then asked Dave Schoen, a Ball State University professor who, with his students, created the GIS for the County and the City of Shelbyville. That quote was $245 for the software and about $18,000 for the enhancements. The existing application uses MapGuide.

Quote of the Week. Chevy Chase gave the commencement address at Pomfret School in Norwich, Connecticut. From an article detailing his talk: "He drove in his Mercedes using an automated map-finder that told him to prepare to turn where an old barn used to be and to remove the casserole from his oven and place it in the glove compartment."

Announcements

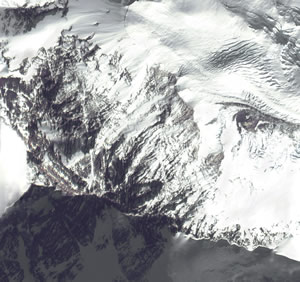

On May 29, 1953, Edmund Hillary and Tenzing Norgay became the first men ever to reach the summit of the world's tallest mountain. Since then, approximately 1,600 men and women have made the daring journey to the top of the 29,035-foot mountain. Space Imaging provided the image at left.

European Space Imaging announced that the company has signed a framework contract with the European Community, titled "Supply of Satellite Remote Sensing Data to European Institutes Services." Under this framework contract, European Space Imaging will provide high-resolution global IKONOS imagery for operational and research activities of the European Commission, especially within the institutes of the Joint Research Centre (JRC) in Ispra, Italy. The contract will run for two years, with the possibility of a one-year extension.

GRW Aerial Surveys, Inc., headquartered in Lexington, Kentucky, has entered the LiDAR market. After considerable research, GRW recently completed the acquisition of an Optech ALTM 33 kHz laser system.

MapInfo announced joint marketing and development agreements with Wi-Fi companies Ekahau and Bridgewater Systems, to deliver a complete, end-to-end Wi-Fi location management solution. MapInfo offers the ability to accurately map and visualize Wireless LAN (WLAN) networks that are near a given customer, near existing serving facilities, or for planned build-out of WLANs tied to existing enterprise networks.

TomTom, a provider of personal navigation systems, announced its presence in the United States with the launch of TomTom Navigator for Pocket PCs. TomTom Navigator will be available from major retailers beginning in June 2003, and will include the TomTom Navigator software, cabling, wired GPS receiver, mounting kit, and maps of the entire United States.

Pinpoint Incorporated was awarded a U.S. patent for a location-enhanced information delivery system. The patent relates to areas known as implicit or passive personalization, user and object profiling, and customized predictive content delivery. Patent No. 6,571,279, issued May 27 by the U.S. Patent and Trademark Office, covers a system by which personalized recommendations are made to users at their locations as determined by a location-information system such as GPS.

A consortium made up of Infoterra Ltd and SERCO has been chosen by Ordnance Survey to provide a hosted and managed application for the provision of the OS MasterMap imagery layer (right). The e-commerce site allows users to view aerial photography, select the area they wish to purchase (using various capture mechanisms), and then automatically receive the data via FTP, CD or DVD, depending upon the requested format. Research Systems, Inc. (RSI) announced that it plans to release IDL version 6.0 in July 2003. This major product release offers significant enhancements in the areas of flexibility and ease-of-use. Municipal Solutions Group, Inc. announced that the company is listed at No. 83, moving up from last year's No. 94 position, on Profit Magazine's List of Top 100 fastest-growing companies in Canada. Spatial Insights will act as a reseller for Sammamish Data Systems, and is offering its 5-digit ZIP Code boundaries, ZIP+2 and Carrier Route boundaries, and ZIP+4 centroids. In a recent meeting with Thailand's Prime Minister Pol. Lt. Col. Thaksin Shinawatra, ESRI President Jack Dangermond discussed the role of geographic information system (GIS) technology in aiding the Thai government in accomplishing its long-term goals. In his meeting with the Prime Minister, as well as subsequent meetings with various officials and organizations involved in the establishment of a Thai National Spatial Data Infrastructure (NSDI), Dangermond expressed his views on the need for data sharing and interoperability between government agencies, as well as the development of updating strategies. In support of education in Thailand, Dangermond recently provided 100 K-12 GIS kits to the country's Ideal Schools program. The kits include GIS software, a community teaching guide, and student handbooks.

Contracts and Sales

DigitalGlobe announced that Bowles Farming, a 12,000-acre family farm in Northern California, is using the company's satellite imagery for several precision farming applications. The farm estimates savings of $69,000 on materials alone from solving an irrigation problem, and expects the improved yield to increase revenue by $17,150 after the first year.

Calgary-based CSI Wireless, a maker of wireless phones and global positioning system devices, announced a $2-million deal to supply stolen-vehicle recovery products to a Florida company.

GE Network Solutions recently signed an agreement with Zhejiang Provincial Electric Power Company (ZPEPC) in China to optimize its energy distribution and transmission infrastructure.

Merrick & Company announced a $1.1 million contract with Jones Edmunds & Associates to collect LiDAR and color digital orthophotography for Marion County, Florida.

The Medina County (Texas) Appraisal District has contracted with Houston-based Stewart Geo Technologies to provide orthophotography and mapping services for both the appraisal district and the county's 911 Emergency Communications District.

Products

ESRI Business Information Solutions (ESRI BIS) launched the first phase of the private labeling and hosting functionalities of its website. New features and website innovations provide clients and partners with flexible options for increased accessibility to demographics and spending pattern data, reports, and maps from ESRI BIS, in addition to aerial photography and satellite imagery from Pixxures, Inc.

Ultimate Earth is a large and complex dataset that works with either World Construction Set 6 or Visual Nature Studio 2. The Ultimate Earth Content DVD-ROM provides 1 kilometer land mass terrain data, 10 kilometer bathymetry, 1 kilometer color imagery, and 5 kilometer cloudmap data for the entire globe. Included are more than 939 million DEM data points and more than one billion pixels of imagery. That's Hawaii at left.

PCI Geomatics announced that new rigorous model support for EROS 1.8m satellite panchromatic data is included within the company's new Geomatica 9 software solution.

Leica Geosystems GIS & Mapping, LLC, announced notable performance improvements made to the Leica ALS50 Airborne Laser Scanner. The changes represent a 10 percent improvement in point density over currently available competing systems and reduce the minimum flying height levels typical of helicopter operation in corridor mapping applications.

Visual Nature Studio 2 now supports Earth Resource Mapping's Enhanced Compression Wavelet (ECW) technology.

Navigation Technologies now offers digital map coverage in Singapore and neighboring Johor Bahru, Malaysia.

Webraska launched the SmartZone Navigation Suite. Based on Webraska's technology, the Suite comprises off-the-shelf applications and software development kits (SDKs) for the development and deployment of wireless, turn-by-turn navigation applications.

CARIS released Spatial Fusion version 3.1. The new version includes out-of-the-box support for version 1.1.1 of the OpenGIS Web Map Service (WMS) Implementation Specification; Point of Interest (POI) support to allow the easy addition of POIs; support for DGN files and Marine format (S-57) enhancements; and new logging capabilities to provide additional feedback from the Fusion data services.

Hires and New Offices

Corbley Communications Inc., a public relations and advertising firm serving the international geotechnologies industry, has opened its office in Fort Collins, Colorado.

Events
